1,476 posts tagged “datasette”

Datasette is an open source tool for exploring and publishing data.

2026

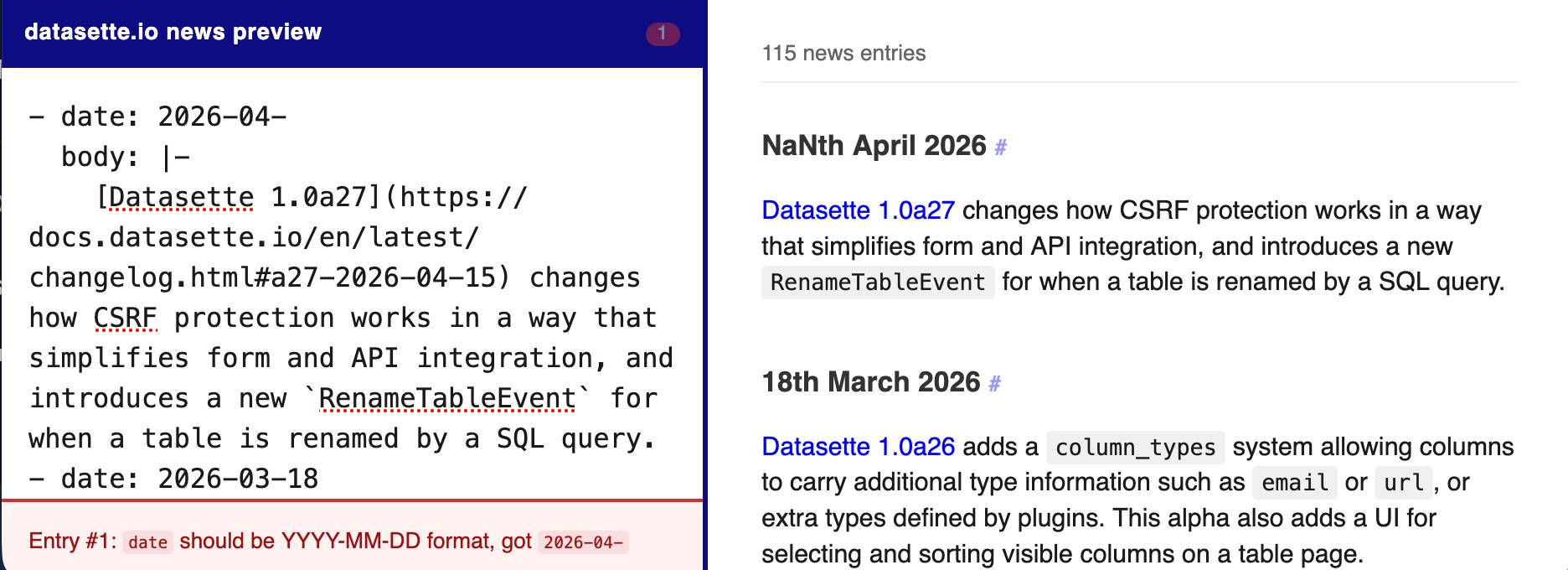

The datasette.io website has a news section built from this news.yaml file in the underlying GitHub repository. The YAML format looks like this:

    - date: 2026-04-15
      body: |-
        [Datasette 1.0a27](https://docs.datasette.io/en/latest/changelog.html#a27-2026-04-15) changes how CSRF protection works in a way that simplifies form and API integration, and introduces a new `RenameTableEvent` for when a table is renamed by a SQL query.
    - date: 2026-03-18
      body: |-
        ...

This format is a little hard to edit, so I finally had Claude build a custom preview UI to take some of the friction out of checking for errors.

I built it using standard claude.ai and Claude Artifacts, taking advantage of Claude's ability to clone GitHub repos and look at their content as part of a regular chat:

Clone https://github.com/simonw/datasette.io and look at the news.yaml file and how it is rendered on the homepage. Build an artifact I can paste that YAML into which previews what it will look like, and highlights any markdown errors or YAML errors
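The kind of check such a preview needs can be sketched in Python. This is a minimal sketch and not the artifact Claude built: it assumes the YAML has already been parsed (for example with PyYAML's `yaml.safe_load`) into a list of dictionaries, and `check_news_entries` is a hypothetical helper name. The bracket check is only a rough heuristic for broken Markdown links.

```python
import datetime


def check_news_entries(entries):
    """Return a list of human-readable problems found in parsed news entries.

    `entries` is assumed to be what a YAML parser returns for news.yaml:
    a list of dicts, each with a `date` and a Markdown `body`.
    """
    if not isinstance(entries, list):
        return ["Top level of the document should be a list of entries"]
    problems = []
    for index, entry in enumerate(entries):
        label = f"entry {index + 1}"
        if not isinstance(entry, dict):
            problems.append(f"{label}: expected a mapping with date and body")
            continue
        date = entry.get("date")
        # YAML parsers usually turn 2026-04-15 into a date object already,
        # but a quoted value would arrive as a string.
        if isinstance(date, str):
            try:
                datetime.date.fromisoformat(date)
            except ValueError:
                problems.append(f"{label}: date {date!r} is not a valid ISO date")
        elif not isinstance(date, datetime.date):
            problems.append(f"{label}: missing or invalid date")
        body = entry.get("body")
        if not isinstance(body, str) or not body.strip():
            problems.append(f"{label}: body is missing or empty")
        elif body.count("[") != body.count("]"):
            # Very rough heuristic for a broken Markdown link
            problems.append(f"{label}: unbalanced square brackets in body")
    return problems
```

An empty list means nothing suspicious was found; anything else can be shown next to the rendered preview.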

This plugin was using the ds_csrftoken cookie as part of a custom signed URL, which needed upgrading now that Datasette 1.0a27 no longer sets that cookie.

Two major changes in this new Datasette alpha. I covered the first of those in detail yesterday - Datasette no longer uses Django-style CSRF form tokens, instead using modern browser headers as described by Filippo Valsorda.

The second big change is that Datasette now fires a new RenameTableEvent any time a table is renamed during a SQLite transaction. This is useful because some plugins (like datasette-comments) attach additional data to table records by name, so a renamed table requires them to react in appropriate ways.
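To illustrate why a rename matters to such plugins, here is a minimal sketch that assumes a plugin keeps notes keyed by database and table name. `AnnotationStore` and `handle_table_renamed` are hypothetical names, and this is not how datasette-comments is implemented. A real plugin would call something like this from wherever it receives the event.

```python
class AnnotationStore:
    """Hypothetical in-memory store of notes keyed by (database, table)."""

    def __init__(self):
        self._notes = {}

    def add(self, database, table, note):
        self._notes.setdefault((database, table), []).append(note)

    def get(self, database, table):
        return list(self._notes.get((database, table), []))

    def handle_table_renamed(self, database, old_name, new_name):
        # Move any notes stored under the old table name to the new one,
        # so they stay attached to the same data after the rename.
        notes = self._notes.pop((database, old_name), None)
        if notes:
            self._notes.setdefault((database, new_name), []).extend(notes)
```

Without the rename handler, notes recorded against the old name would be orphaned as soon as the table was renamed.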

Here are the rest of the changes in the alpha:

- New `actor=` parameter for `datasette.client` methods, allowing internal requests to be made as a specific actor. This is particularly useful for writing automated tests. (#2688)

- New `Database(is_temp_disk=True)` option, used internally for the internal database. This helps resolve intermittent database locked errors caused by the internal database being in-memory as opposed to on-disk. (#2683) (#2684)

- The `/<database>/<table>/-/upsert` API (docs) now rejects rows with `null` primary key values. (#1936)

- Improved example in the API explorer for the `/-/upsert` endpoint (docs). (#1936)

- The `/<database>.json` endpoint now includes an `"ok": true` key, for consistency with other JSON API responses.

- `call_with_supported_arguments()` is now documented as a supported public API. (#2678)
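The idea behind a helper like `call_with_supported_arguments()` can be sketched with `inspect.signature`. This is an illustrative stand-in under stated assumptions, not Datasette's implementation, and `call_with_supported` is a hypothetical name: it passes a function only the keyword arguments that its signature declares.

```python
import inspect


def call_with_supported(fn, **available):
    """Call fn with only those keyword arguments that it declares."""
    parameters = inspect.signature(fn).parameters
    accepts_any = any(
        p.kind is inspect.Parameter.VAR_KEYWORD for p in parameters.values()
    )
    if accepts_any:
        # A **kwargs parameter means the function can take everything
        return fn(**available)
    supported = {
        name: value for name, value in available.items() if name in parameters
    }
    return fn(**supported)
```

This pattern lets callers offer a wide set of arguments while each function names only the ones it cares about.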

A small update for my tool for helping me figure out what all of the Datasette instances on my laptop are up to.

- Show working directory derived from each PID

- Show the full path to each database file

Output now looks like this:

    http://127.0.0.1:8007/ - v1.0a26
      Directory: /Users/simon/dev/blog
      Databases:
        simonwillisonblog: /Users/simon/dev/blog/simonwillisonblog.db
      Plugins:
        datasette-llm
        datasette-secrets

    http://127.0.0.1:8001/ - v1.0a26
      Directory: /Users/simon/dev/creatures
      Databases:
        creatures: /tmp/creatures.db

datasette PR #2689: Replace token-based CSRF with Sec-Fetch-Site header protection.

Datasette has long protected against CSRF attacks using CSRF tokens, implemented using my asgi-csrf Python library. These are something of a pain to work with - you need to scatter forms in templates with `<input type="hidden" name="csrftoken" value="{{ csrftoken() }}">` lines and then selectively disable CSRF protection for APIs that are intended to be called from outside the browser.

I've been following Filippo Valsorda's research here with interest, described in this detailed essay from August 2025 and shipped as part of Go 1.25 that same month.

I've now landed the same change in Datasette. Here's the PR description - Claude Code did much of the work (across 10 commits, closely guided by me and cross-reviewed by GPT-5.4) but I've decided to start writing these PR descriptions by hand, partly to make them more concise and also as an exercise in keeping myself honest.

- New CSRF protection middleware inspired by Go 1.25 and this research by Filippo Valsorda. This replaces the old CSRF token based protection.

- Removes all instances of `<input type="hidden" name="csrftoken" value="{{ csrftoken() }}">` in the templates - they are no longer needed.

- Removes the `def skip_csrf(datasette, scope):` plugin hook defined in `datasette/hookspecs.py` and its documentation and tests.

- Updated CSRF protection documentation to describe the new approach.

- Upgrade guide now describes the CSRF change.
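The header-based approach can be sketched as a single decision function. This is a minimal sketch loosely following the checks Go 1.25 performs, not Datasette's actual middleware: `is_cross_origin_blocked` is a hypothetical name, and `headers` is assumed to be a plain dict with lower-case header names.

```python
from urllib.parse import urlsplit

SAFE_METHODS = {"GET", "HEAD", "OPTIONS"}


def is_cross_origin_blocked(method, headers):
    """Return True if the request should be rejected as cross-origin."""
    if method.upper() in SAFE_METHODS:
        # Safe methods are not expected to change state
        return False
    fetch_site = headers.get("sec-fetch-site")
    if fetch_site is not None:
        # Browsers set this header themselves; page JavaScript cannot forge it.
        # "none" means a user-initiated request such as a typed URL.
        return fetch_site not in ("same-origin", "none")
    origin = headers.get("origin")
    if origin is None:
        # Neither header present: most likely a non-browser client
        return False
    # Older browser without Sec-Fetch-Site: fall back to Origin vs Host
    return urlsplit(origin).netloc != headers.get("host")
```

Because a non-browser client sends neither header, API calls from scripts pass through without needing a token or an opt-out.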

- No longer requires Datasette - running `uvx datasette-ports` now works as well.

- Installing it as a Datasette plugin continues to provide the `datasette ports` command.

Another example of README-driven development, this time solving a problem that might be unique to me.

I often find myself running a bunch of different Datasette instances with different databases and different in-development plugins, spread across dozens of different terminal windows - enough that I frequently lose them!

Now I can run this:

    datasette install datasette-ports
    datasette ports

And get a list of every running instance that looks something like this:

    http://127.0.0.1:8333/ - v1.0a26
      Databases: data
      Plugins: datasette-enrichments, datasette-enrichments-llm, datasette-llm, datasette-secrets

    http://127.0.0.1:8001/ - v1.0a26
      Databases: creatures
      Plugins: datasette-extract, datasette-llm, datasette-secrets

    http://127.0.0.1:8900/ - v0.65.2
      Databases: logs

- The same model ID no longer needs to be repeated in both the default model and allowed models lists - setting it as a default model automatically adds it to the allowed models list. #6

- Improved documentation for Python API usage.

- The `actor` who triggers an enrichment is now passed to the `llm.model(... actor=actor)` method. #3

- Now uses datasette-llm to manage model configuration, which means you can control which models are available for extraction tasks using the `extract` purpose and LLM model configuration. #38

- This plugin now uses datasette-llm to configure and manage models. This means it's possible to specify which models should be made available for enrichments, using the new `enrichments` purpose.

- Removed features relating to allowances and estimated pricing. These are now the domain of datasette-llm-accountant.

- Now depends on datasette-llm for model configuration. #3

- Full prompts and responses and tool calls can now be logged to the `llm_usage_prompt_log` table in the internal database if you set the new `datasette-llm-usage.log_prompts` plugin configuration setting.

- Redesigned the `/-/llm-usage-simple-prompt` page, which now requires the `llm-usage-simple-prompt` permission.

- The `llm_prompt_context()` plugin hook wrapper mechanism now tracks prompts executed within a chain as well as one-off prompts, which means it can be used to track tool call loops. #5

- Ability to configure different API keys for models based on their purpose - for example, set it up so enrichments always use `gpt-5.4-mini` with an API key dedicated to that purpose. #4

I released llm-echo 0.3 to provide an API key testing utility I needed for the tests for this new feature.

I'm working on integrating datasette-files into other plugins, such as datasette-extract. This necessitated a new release of the base plugin.

- `owners_can_edit` and `owners_can_delete` configuration options, plus the `files-edit` and `files-delete` actions are now scoped to a new `FileResource` which is a child of `FileSourceResource`. #18

- The file picker UI is now available as a `<datasette-file-picker>` Web Component. Thanks, Alex Garcia. #19

- New `from datasette_files import get_file` Python API for other plugins that need to access file data. #20

Adds the ability to configure which LLMs are available for which purpose, which means you can restrict the list of models that can be used with a specific plugin. #3

`actor` is now available to the `llm_prompt_context` plugin hook. #2

A backend for datasette-files that adds the ability to store and retrieve files using an S3 bucket. This release added a mechanism for fetching S3 configuration periodically from a URL, which means we can use time limited IAM credentials that are restricted to a prefix within a bucket.
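One way to picture periodic configuration fetching is a small cache that re-fetches once a time-to-live has passed. This is a minimal sketch with hypothetical names throughout: `RefreshingConfig`, the `fetch` callable and the 300 second default are assumptions for illustration, not the plugin's real code or settings.

```python
import time


class RefreshingConfig:
    """Cache a config dict and re-fetch it once it is older than `ttl` seconds."""

    def __init__(self, fetch, ttl=300, clock=time.monotonic):
        self._fetch = fetch  # callable returning a fresh config dict
        self._ttl = ttl
        self._clock = clock  # injectable so tests can control time
        self._value = None
        self._fetched_at = None

    def get(self):
        now = self._clock()
        expired = (
            self._fetched_at is None or now - self._fetched_at >= self._ttl
        )
        if expired:
            # Replace the cached value, e.g. with newly issued credentials
            self._value = self._fetch()
            self._fetched_at = now
        return self._value
```

Keeping the time-to-live shorter than the lifetime of the credentials means callers should always see a set that is still valid.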

New release of the base plugin that makes models from LLM available for use by other Datasette plugins such as datasette-enrichments-llm.

- New `register_llm_purposes()` plugin hook and `get_purposes()` function for retrieving registered purpose strings. #1

One of the responsibilities of this plugin is to configure which models are used for which purposes, so you can say in one place "data enrichment uses GPT-5.4-nano but SQL query assistance happens using Sonnet 4.6", for example.

Plugins that depend on this can use `model = await llm.model(purpose="enrichment")` to indicate the purpose of the prompts they wish to execute against the model. Those plugins can now also use the new `register_llm_purposes()` hook to register those purpose strings, which means future plugins can list those purposes in one place to power things like an admin UI for assigning models to purposes.
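The purpose-to-model idea can be sketched as a lookup with a fallback. Everything here is hypothetical: `ModelPurposes`, its methods and the model ID strings are illustrative only and are not datasette-llm's API.

```python
class ModelPurposes:
    """Map purpose strings to model IDs, with a default for everything else."""

    def __init__(self, default_model):
        self.default_model = default_model
        self._registered = set()
        self._assignments = {}

    def register(self, purpose):
        # Plugins announce the purposes they use so they can be listed later
        self._registered.add(purpose)

    def assign(self, purpose, model_id):
        if purpose not in self._registered:
            raise KeyError(f"Unknown purpose: {purpose}")
        self._assignments[purpose] = model_id

    def purposes(self):
        return sorted(self._registered)

    def model_for(self, purpose):
        # Fall back to the default when no model was assigned
        return self._assignments.get(purpose, self.default_model)
```

Listing registered purposes in one place is what would let an admin UI offer a model choice for each of them.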

The most interesting alpha of datasette-files yet, a new plugin which adds the ability to upload files directly into a Datasette instance. Here are the release notes in full:

- Columns are now configured using the new column_types system from Datasette 1.0a26. #8

- New `file_actions` plugin hook, plus ability to import an uploaded CSV/TSV file to a table. #10

- UI for uploading multiple files at once via the new documented JSON upload API. #11

- Thumbnails are now generated for image files and stored in an internal `datasette_files_thumbnails` table. #13

Coding agents for data analysis. Here's the handout I prepared for my NICAR 2026 workshop "Coding agents for data analysis" - a three hour session aimed at data journalists demonstrating ways that tools like Claude Code and OpenAI Codex can be used to explore, analyze and clean data.

Here's the table of contents:

I ran the workshop using GitHub Codespaces and OpenAI Codex, since it was easy (and inexpensive) to distribute a budget-restricted API key for Codex that attendees could use during the class. Participants ended up burning $23 of Codex tokens.

The exercises all used Python and SQLite and some of them used Datasette.

One highlight of the workshop was when we started running Datasette such that it served static content from a viz/ folder, then had Claude Code start vibe coding new interactive visualizations directly in that folder. Here's a heat map it created for my trees database using Leaflet and Leaflet.heat, source code here.

I designed the handout to also be useful for people who weren't able to attend the session in person. As is usually the case, material aimed at data journalists is equally applicable to anyone else with data to explore.

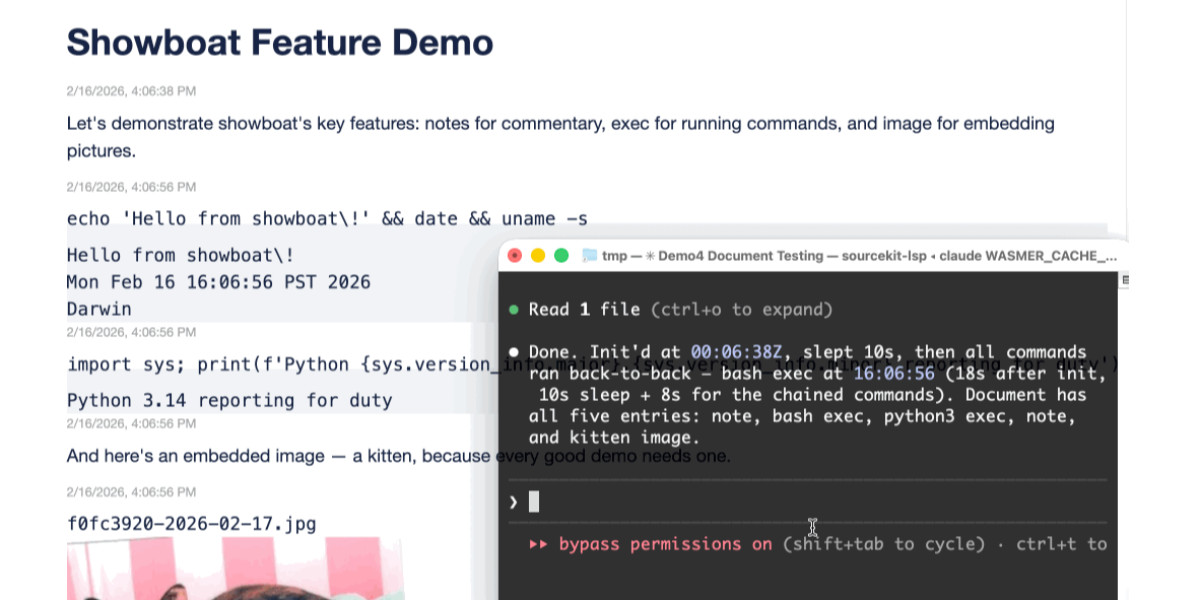

Two new Showboat tools: Chartroom and datasette-showboat

I introduced Showboat a week ago—my CLI tool that helps coding agents create Markdown documents that demonstrate the code that they have created. I’ve been finding new ways to use it on a daily basis, and I’ve just released two new tools to help get the best out of the Showboat pattern. Chartroom is a CLI charting tool that works well with Showboat, and datasette-showboat lets Showboat’s new remote publishing feature incrementally push documents to a Datasette instance.

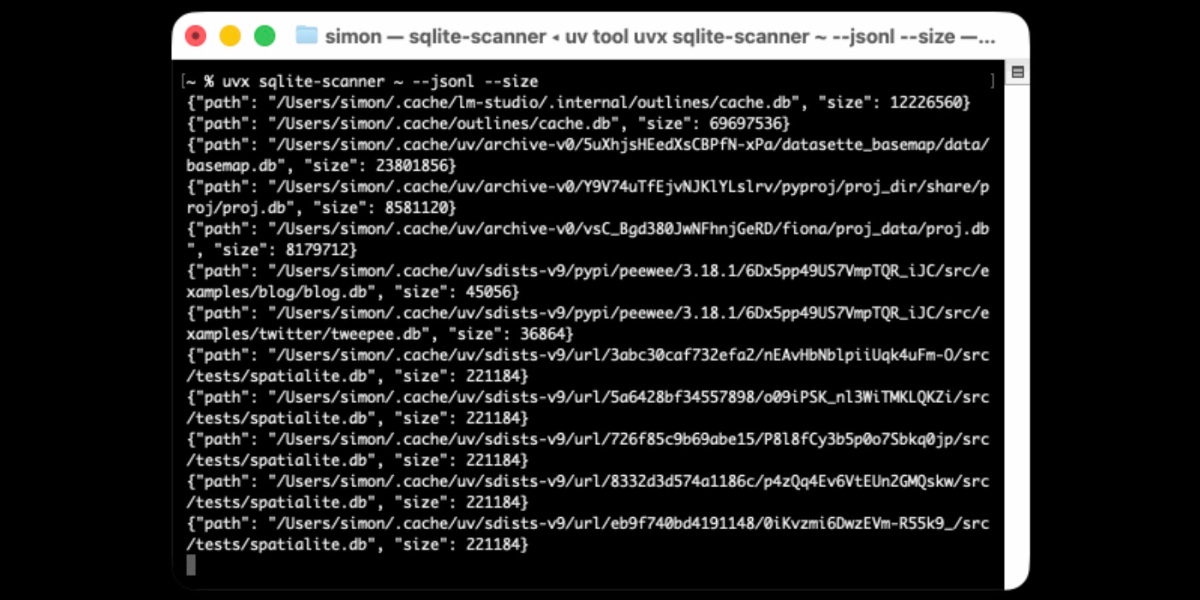

[... 1,756 words]

Distributing Go binaries like sqlite-scanner through PyPI using go-to-wheel

I’ve been exploring Go for building small, fast and self-contained binary applications recently. I’m enjoying how there’s generally one obvious way to do things and the resulting code is boring and readable—and something that LLMs are very competent at writing. The one catch is distribution, but it turns out publishing Go binaries to PyPI means any Go binary can be just a uvx package-name call away.