Elsewhere


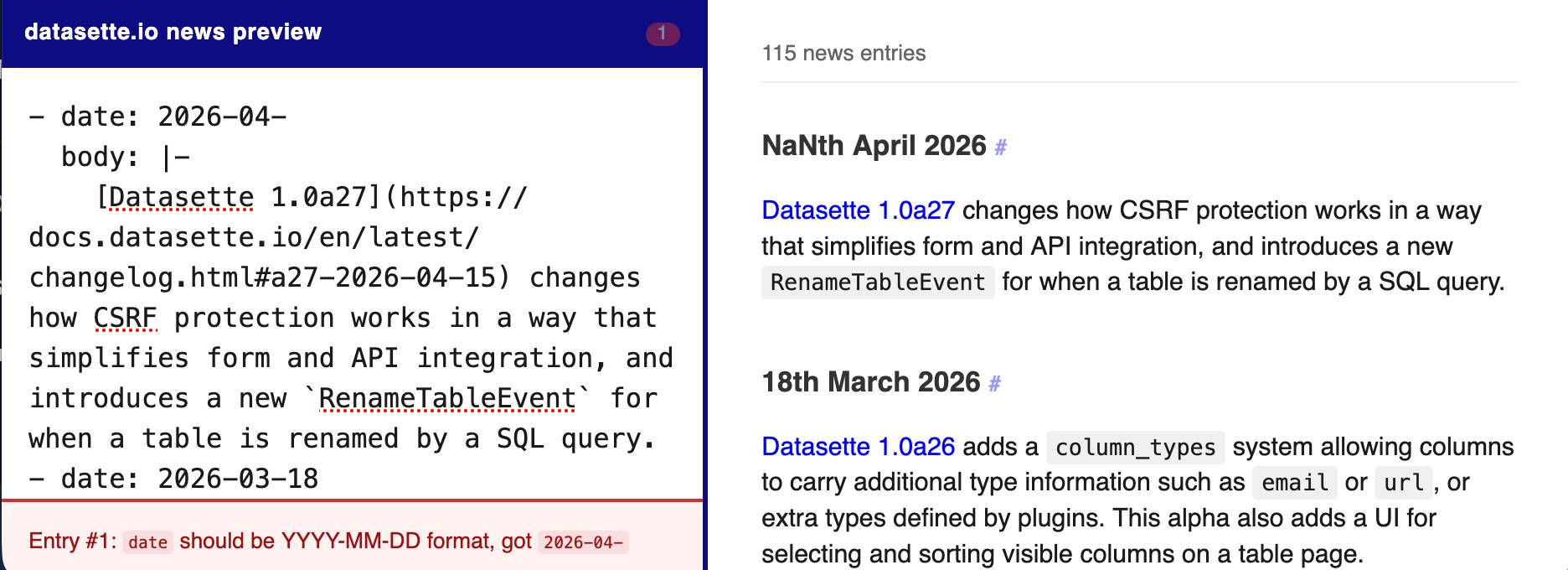

The datasette.io website has a news section built from this news.yaml file in the underlying GitHub repository. The YAML format looks like this:

    - date: 2026-04-15
      body: |-
        [Datasette 1.0a27](https://docs.datasette.io/en/latest/changelog.html#a27-2026-04-15) changes how CSRF protection works in a way that simplifies form and API integration, and introduces a new `RenameTableEvent` for when a table is renamed by a SQL query.
    - date: 2026-03-18
      body: |-
        ...

This format is a little hard to edit, so I finally had Claude build a custom preview UI to make checking for errors have slightly less friction.

I built it using standard claude.ai and Claude Artifacts, taking advantage of Claude's ability to clone GitHub repos and look at their content as part of a regular chat:

> Clone https://github.com/simonw/datasette.io and look at the news.yaml file and how it is rendered on the homepage. Build an artifact I can paste that YAML into which previews what it will look like, and highlights any markdown errors or YAML errors

This plugin was using the ds_csrftoken cookie as part of a custom signed URL, which needed upgrading now that Datasette 1.0a27 no longer sets that cookie.

Two major changes in this new Datasette alpha. I covered the first of those in detail yesterday - Datasette no longer uses Django-style CSRF form tokens, instead using modern browser headers as described by Filippo Valsorda.
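The header-based approach can be sketched roughly like this. It is a minimal illustration of the general technique (trust `Sec-Fetch-Site` when the browser sends it, otherwise compare `Origin` against `Host`), not Datasette's actual implementation. The function name `is_cross_site_request` and the decision to let header-less requests through are assumptions made for the example.

```python
from urllib.parse import urlsplit

SAFE_METHODS = {"GET", "HEAD", "OPTIONS"}


def is_cross_site_request(method: str, headers: dict) -> bool:
    """Return True if a request looks like a cross-site browser request.

    Hypothetical helper: header names are expected in lowercase.
    """
    if method.upper() in SAFE_METHODS:
        # Safe methods should not change state, so they are not checked.
        return False

    fetch_site = headers.get("sec-fetch-site")
    if fetch_site is not None:
        # Modern browsers send this header. "none" means a user-initiated
        # navigation such as a bookmark or a typed URL.
        return fetch_site not in ("same-origin", "none")

    origin = headers.get("origin")
    if origin is not None:
        # Fallback for older browsers: compare the Origin host with Host.
        return urlsplit(origin).netloc != headers.get("host")

    # Neither header present: most likely a non-browser client such as curl.
    return False
```

A server using a check like this would reject the request (for example with a 403) whenever the function returns `True`, with no form token involved.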

The second big change is that Datasette now fires a new RenameTableEvent any time a table is renamed during a SQLite transaction. This is useful because some plugins (like datasette-comments) attach additional data to table records by name, so a renamed table requires them to react in appropriate ways.
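To illustrate why a rename matters to plugins that key their data by table name, here is a self-contained sketch using only the standard library's `sqlite3` module. The `annotations` table and the `on_table_renamed()` handler are hypothetical: they are not part of Datasette or datasette-comments, and the sketch does not use the real `RenameTableEvent` class.

```python
import sqlite3


def on_table_renamed(conn: sqlite3.Connection, old_name: str, new_name: str) -> int:
    """Hypothetical handler: re-point name-keyed rows at the new table name."""
    cursor = conn.execute(
        "UPDATE annotations SET table_name = ? WHERE table_name = ?",
        (new_name, old_name),
    )
    conn.commit()
    return cursor.rowcount


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE creatures (id INTEGER PRIMARY KEY, name TEXT)")
# Plugin-owned data that refers to another table by name only.
conn.execute("CREATE TABLE annotations (table_name TEXT, row_id INTEGER, note TEXT)")
conn.execute("INSERT INTO annotations VALUES ('creatures', 1, 'check spelling')")

# A rename leaves the annotation pointing at a name that no longer exists...
conn.execute("ALTER TABLE creatures RENAME TO animals")
# ...until something reacts to the rename.
updated = on_table_renamed(conn, "creatures", "animals")
```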

Here are the rest of the changes in the alpha:

- New `actor=` parameter for `datasette.client` methods, allowing internal requests to be made as a specific actor. This is particularly useful for writing automated tests. (#2688)
- New `Database(is_temp_disk=True)` option, used internally for the internal database. This helps resolve intermittent database locked errors caused by the internal database being in-memory as opposed to on-disk. (#2683) (#2684)
- The `/<database>/<table>/-/upsert` API (docs) now rejects rows with `null` primary key values. (#1936)
- Improved example in the API explorer for the `/-/upsert` endpoint (docs). (#1936)
- The `/<database>.json` endpoint now includes an `"ok": true` key, for consistency with other JSON API responses.
- `call_with_supported_arguments()` is now documented as a supported public API. (#2678)

See my notes on Google's new Gemini 3.1 Flash TTS text-to-speech model.

A small update for my tool for helping me figure out what all of the Datasette instances on my laptop are up to.

- Show working directory derived from each PID

- Show the full path to each database file

Output now looks like this:

    http://127.0.0.1:8007/ - v1.0a26
      Directory: /Users/simon/dev/blog
      Databases:
        simonwillisonblog: /Users/simon/dev/blog/simonwillisonblog.db
      Plugins:
        datasette-llm
        datasette-secrets
    http://127.0.0.1:8001/ - v1.0a26
      Directory: /Users/simon/dev/creatures
      Databases:
        creatures: /tmp/creatures.db

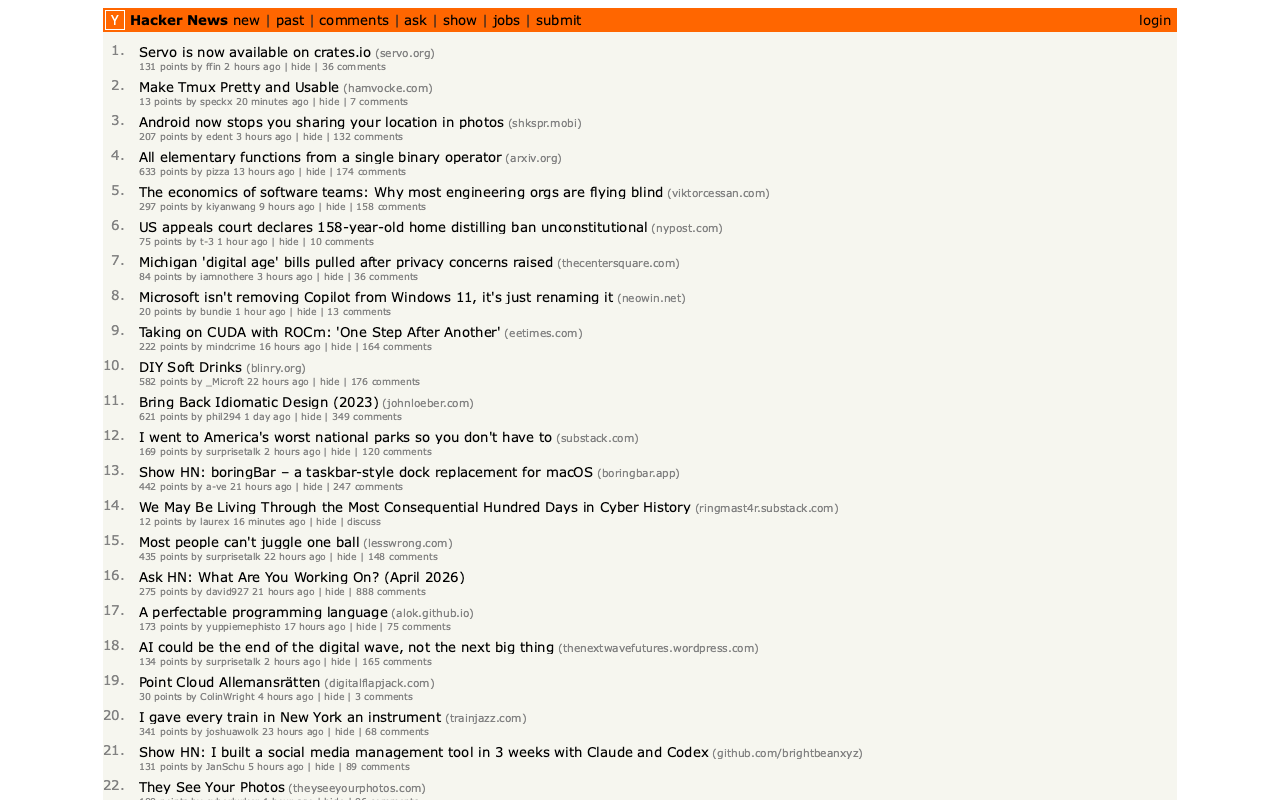

In "Servo is now available on crates.io" the Servo team announced the initial release of the servo crate, which packages their browser engine as an embeddable library.

I set Claude Code for web the task of figuring out what it can do, building a CLI tool for taking screenshots using it and working out if it could be compiled to WebAssembly.

The servo-shot Rust tool it built works pretty well:

    git clone https://github.com/simonw/research
    cd research/servo-crate-exploration/servo-shot
    cargo build
    ./target/debug/servo-shot https://news.ycombinator.com/

Here's the result:

Compiling Servo itself to WebAssembly is not feasible due to its heavy use of threads and dependencies like SpiderMonkey, but Claude did build me this playground page for trying out a WebAssembly build of the html5ever and markup5ever_rcdom crates, providing a tool for turning fragments of HTML into a parse tree.

See my notes on SQLite 3.53.0. This playground provides a UI for trying out the various rendering options for SQL result tables from the new Query Result Formatter library, compiled to WebAssembly.

GitHub doesn't tell you the repo size in the UI, but it's available in the CORS-friendly API. Paste a repo into this tool to see the size, for example for simonw/datasette (8.1MB).
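A minimal sketch of the same lookup in Python, assuming the `size` field returned by `https://api.github.com/repos/{owner}/{repo}` is reported in kilobytes (my understanding of the GitHub REST API). The helper names are hypothetical, and dividing by 1024 is an assumption that may not match the rounding the tool itself uses.

```python
import json
import urllib.request


def format_repo_size(repo_info: dict) -> str:
    """Format the `size` field (assumed to be kilobytes) as megabytes."""
    size_kb = repo_info["size"]
    return f"{size_kb / 1024:.1f}MB"


def fetch_repo_info(repo: str) -> dict:
    """Fetch repository metadata for a repo such as "simonw/datasette"."""
    url = f"https://api.github.com/repos/{repo}"
    request = urllib.request.Request(
        url, headers={"Accept": "application/vnd.github+json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Calling `format_repo_size(fetch_repo_info("simonw/datasette"))` makes one unauthenticated API request, which is subject to GitHub's rate limits.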

I ran into trouble deploying a new feature using SSE to a production Datasette instance, and it turned out that instance was using datasette-gzip, which uses asgi-gzip, which was incorrectly compressing text/event-stream responses.

asgi-gzip was extracted from Starlette, and has a GitHub Actions scheduled workflow to check Starlette for updates that need to be ported to the library... but that action had stopped running and hence had missed Starlette's own fix for this issue.

I ran the workflow and integrated the new fix, and now datasette-gzip and asgi-gzip both correctly handle text/event-stream in SSE responses.
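The essence of a fix like this is a content-type check before compression. The following is a sketch of that idea only, not the code from Starlette or asgi-gzip; `should_compress` is a hypothetical name.

```python
from typing import Optional


def should_compress(content_type: Optional[str]) -> bool:
    """Hypothetical check a gzip middleware could run before compressing."""
    if content_type is None:
        return True
    # Strip parameters such as "; charset=utf-8" before comparing.
    media_type = content_type.split(";", 1)[0].strip().lower()
    # Server-sent events need to reach the client as they are produced;
    # buffering them inside a gzip stream delays or breaks delivery.
    return media_type != "text/event-stream"
```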

Inspired by this conversation on Hacker News about whether two SQLite processes in separate Docker containers that share the same volume might run into problems due to WAL shared memory. The answer is that everything works fine - Docker containers on the same host and filesystem share the same shared memory in a way that allows WAL to collaborate as it should.
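The Docker experiment is hard to reproduce in a snippet, but the underlying behavior can be sketched with two connections to one WAL-mode database file. This is a simplified stand-in: both connections live in the same Python process here, whereas the research used separate processes in separate containers.

```python
import os
import sqlite3
import tempfile

db_path = os.path.join(tempfile.mkdtemp(), "shared.db")

writer = sqlite3.connect(db_path)
# journal_mode=WAL is persistent: it is recorded in the database file.
mode = writer.execute("PRAGMA journal_mode=WAL").fetchone()[0]
writer.execute("CREATE TABLE log (message TEXT)")
writer.commit()

# A second connection stands in for the second process or container.
reader = sqlite3.connect(db_path)

writer.execute("INSERT INTO log VALUES ('hello from writer')")
writer.commit()

# The reader sees the committed row through the shared -wal and -shm files.
rows = reader.execute("SELECT message FROM log").fetchall()
```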

- No longer requires Datasette - running `uvx datasette-ports` now works as well.
- Installing it as a Datasette plugin continues to provide the `datasette ports` command.

- New

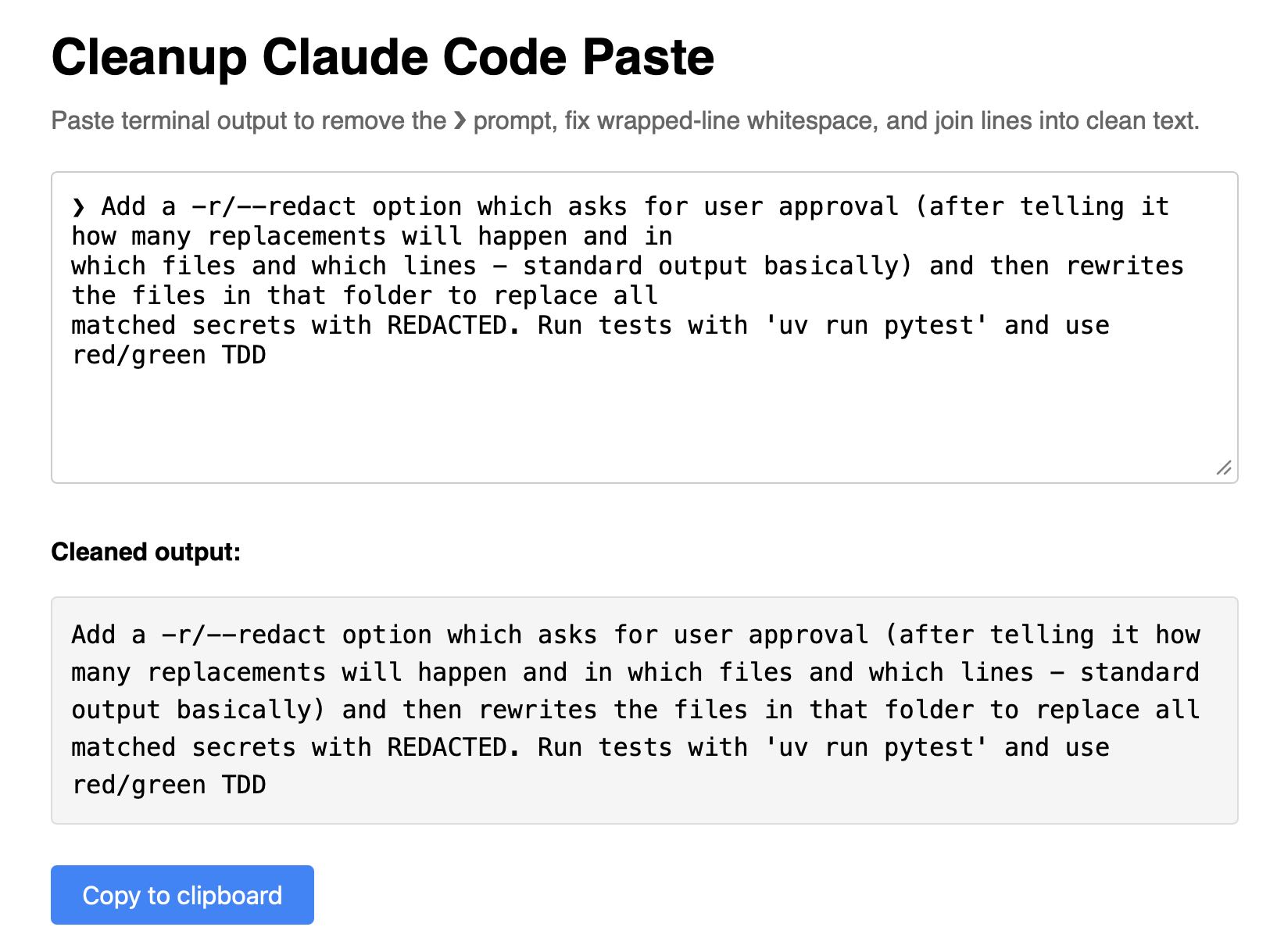

-r/--redactoption which shows the list of matches, asks for confirmation and then replaces every match withREDACTED, taking escaping rules into account.- New Python function

redact_file(file_path: str | Path, secrets: list[str], replacement: str = "REDACTED") -> int.

Super-niche tool this. I sometimes copy prompts out of the Claude Code terminal app and they come out with a bunch of weird additional whitespace. This tool cleans that up.

Another example of README-driven development, this time solving a problem that might be unique to me.

I often find myself running a bunch of different Datasette instances with different databases and different in-development plugins, spread across dozens of different terminal windows - enough that I frequently lose them!

Now I can run this:

    datasette install datasette-ports
    datasette ports

And get a list of every running instance that looks something like this:

    http://127.0.0.1:8333/ - v1.0a26
      Databases: data
      Plugins: datasette-enrichments, datasette-enrichments-llm, datasette-llm, datasette-secrets
    http://127.0.0.1:8001/ - v1.0a26
      Databases: creatures
      Plugins: datasette-extract, datasette-llm, datasette-secrets
    http://127.0.0.1:8900/ - v0.65.2
      Databases: logs

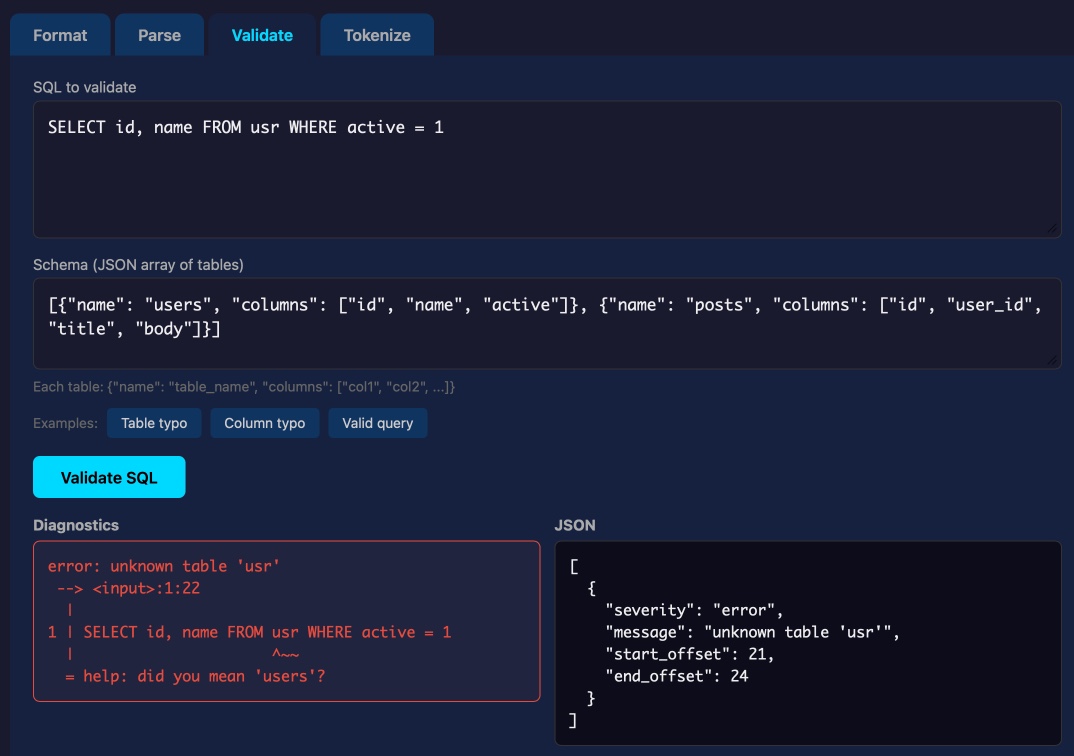

Lalit Maganti's syntaqlite is currently being discussed on Hacker News thanks to "Eight years of wanting, three months of building with AI", a deep dive into how it was built.

This inspired me to revisit a research project I ran when Lalit first released it a couple of weeks ago, where I tried it out and then compiled it to a WebAssembly wheel so it could run in Pyodide in a browser (the library itself uses C and Rust).

This new playground loads up the Python library and provides a UI for trying out its different features: formatting, parsing into an AST, validating, and tokenizing SQLite SQL queries.

Update: not sure how I missed this but syntaqlite has its own WebAssembly playground linked to from the README.

- CLI tool now streams results as they are found rather than waiting until the end, which is better for large directories.
- `-d/--directory` option can now be used multiple times to scan multiple directories.
- New `-f/--file` option for specifying one or more individual files to scan.
- New `scan_directory_iter()`, `scan_file()` and `scan_file_iter()` Python API functions.
- New `-v/--verbose` option which shows each directory that is being scanned.

- Added documentation of the escaping schemes that are also scanned.
- Removed unnecessary `repr` escaping scheme, which was already covered by `json`.

I like publishing transcripts of local Claude Code sessions using my claude-code-transcripts tool but I'm often paranoid that one of my API keys or similar secrets might inadvertently be revealed in the detailed log files.

I built this new Python scanning tool to help reassure me. You can feed it secrets and have it scan for them in a specified directory:

    uvx scan-for-secrets $OPENAI_API_KEY -d logs-to-publish/

If you leave off the -d it defaults to the current directory.

It doesn't just scan for the literal secrets - it also scans for common encodings of those secrets e.g. backslash or JSON escaping, as described in the README.
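As a rough illustration of scanning for encoded variants as well as the literal value, here is a small sketch. It is not the tool's implementation: `secret_variants()` and `find_secrets()` are hypothetical names, and the two escaping schemes shown are only my guess at the kind of thing the README describes.

```python
import json
from pathlib import Path
from typing import Iterator, List, Set, Tuple


def secret_variants(secret: str) -> Set[str]:
    """Return the literal secret plus a couple of escaped forms of it."""
    return {
        secret,
        # JSON string escaping, without the surrounding double quotes.
        json.dumps(secret)[1:-1],
        # Backslashes doubled, as happens when text is escaped a second time.
        secret.replace("\\", "\\\\"),
    }


def find_secrets(directory: Path, secrets: List[str]) -> Iterator[Tuple[Path, str]]:
    """Yield (path, secret) for each file containing a secret or a variant."""
    for path in sorted(directory.rglob("*")):
        if not path.is_file():
            continue
        text = path.read_text(errors="replace")
        for secret in secrets:
            if any(variant in text for variant in secret_variants(secret)):
                # Yielding lets callers report matches as they are found.
                yield path, secret
```

Because `find_secrets()` is a generator, a caller can print each match immediately instead of waiting for the whole directory to be scanned.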

If you have a set of secrets you always want to protect you can list commands to echo them in a ~/.scan-for-secrets.conf.sh file. Mine looks like this:

    llm keys get openai
    llm keys get anthropic
    llm keys get gemini
    llm keys get mistral
    awk -F= '/aws_secret_access_key/{print $2}' ~/.aws/credentials | xargs

I built this tool using README-driven development: I carefully constructed the README describing exactly how the tool should work, then dumped it into Claude Code and told it to build the actual tool (using red/green TDD, naturally).

I'm working on a major change to my LLM Python library and CLI tool. LLM provides an abstraction layer over hundreds of different LLMs from dozens of different vendors thanks to its plugin system, and some of those vendors have grown new features over the past year which LLM's abstraction layer can't handle, such as server-side tool execution.

To help design that new abstraction layer I had Claude Code read through the Python client libraries for Anthropic, OpenAI, Gemini and Mistral and use those to help craft curl commands to access the raw JSON for both streaming and non-streaming modes across a range of different scenarios. Both the scripts and the captured outputs now live in this new repo.

In trying to build my own version of Claude Artifacts I got curious about options for applying CSP headers to content in sandboxed iframes without using a separate domain to host the files. Turns out you can inject <meta http-equiv="Content-Security-Policy"...> tags at the top of the iframe content and they'll be obeyed even if subsequent untrusted JavaScript tries to manipulate them.
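Here is a sketch of how that injection might look when building the iframe markup on the server. The policy string, the `sandbox` value and the `build_sandboxed_iframe()` name are all assumptions chosen for illustration, not the configuration I ended up using.

```python
import html

# Hypothetical policy: block all network access, allow inline script and style.
CSP_POLICY = "default-src 'none'; script-src 'unsafe-inline'; style-src 'unsafe-inline'"


def build_sandboxed_iframe(untrusted_html: str, policy: str = CSP_POLICY) -> str:
    """Return an <iframe> whose srcdoc starts with a CSP <meta> tag."""
    meta = (
        '<meta http-equiv="Content-Security-Policy" '
        f'content="{html.escape(policy)}">'
    )
    # The meta tag goes first so it is parsed before any untrusted markup.
    document = meta + untrusted_html
    # srcdoc is an attribute, so the whole document must be attribute-escaped.
    return f'<iframe sandbox="allow-scripts" srcdoc="{html.escape(document)}"></iframe>'
```

Using `srcdoc` keeps everything on one domain, which is the constraint that prompted the research in the first place.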

New models gemini-3.1-flash-lite-preview, gemma-4-26b-a4b-it and gemma-4-31b-it. See my notes on Gemma 4.